1 Overview of GlassFish Server Performance Tuning

You can significantly improve performance of the Oracle GlassFish Server and of applications deployed to it by adjusting a few deployment and server configuration settings. However, it is important to understand the environment and performance goals. An optimal configuration for a production environment might not be optimal for a development environment.

Process Overview

The following table outlines the overall GlassFish Server 4.0 administration process, and shows where performance tuning fits in the sequence.

Table 1-1 Performance Tuning Roadmap

| Step | Description of Task | Location of Instructions |
|---|---|---|
| 1 | Design: Decide on the high-availability topology and set up GlassFish Server. | |
| 2 | Capacity Planning: Make sure the systems have sufficient resources to perform well. | |
| 3 | Installation: Configure your DAS, clusters, and clustered server instances. | |
| 4 | Deployment: Install and run your applications. Familiarize yourself with how to configure and administer the GlassFish Server. | |
| 5 | High Availability Configuration: Configure your DAS, clusters, and clustered server instances for high availability and failover. | |
| 6 | Performance Tuning: Tune the application, the GlassFish Server, the Java runtime system, and the operating system and platform. | |

Performance Tuning Sequence

Application developers should tune applications prior to production use. Tuning applications often produces dramatic performance improvements. System administrators perform the remaining steps in the following list after tuning the application, or when application tuning has to wait and you want to improve performance as much as possible in the meantime.

Ideally, follow this sequence of steps when you are tuning performance:

1. Tune your application, as described in Tuning Your Application.

2. Tune the server, as described in Tuning the GlassFish Server.

3. Tune the Java runtime system, as described in Tuning the Java Runtime System.

4. Tune the operating system, as described in Tuning the Operating System and Platform.

Understanding Operational Requirements

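Before you begin to deploy and tune your application on the GlassFish Server, it is important to clearly define the operational environment. The operational environment is determined by high-level constraints and requirements such as:

- Application Architecture
- Security Requirements
- High Availability Clustering, Load Balancing, and Failover
- Hardware Resources
- Administration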

Application Architecture

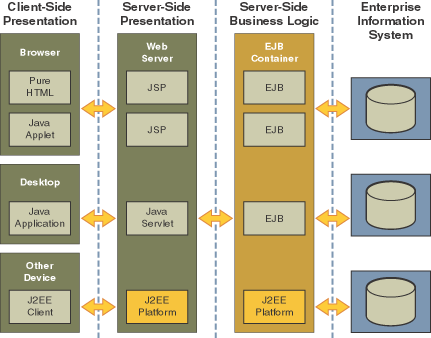

The Java EE Application model, as shown in the following figure, is very flexible; allowing the application architect to split application logic functionally into many tiers. The presentation layer is typically implemented using servlets and JSP technology and executes in the web container.

Moderately complex enterprise applications can be developed entirely using servlets and JSP technology. More complex business applications often use Enterprise JavaBeans (EJB) components. The GlassFish Server integrates the web and EJB containers in a single process, so local access to EJB components from servlets is very efficient. However, some application deployments may require EJB components to execute in a separate process and be accessible from standalone client applications as well as servlets. Based on the application architecture, the server administrator can employ the GlassFish Server in multiple tiers, or simply host both the presentation and business logic on a single tier.

It is important to understand the application architecture before designing a new GlassFish Server deployment, and when deploying a new business application to an existing application server deployment.

Security Requirements

Most business applications require security. This section discusses security considerations and decisions.

User Authentication and Authorization

Application users must be authenticated. The GlassFish Server provides a number of choices for user authentication, including file-based, administration, LDAP, certificate, JDBC, digest, PAM, Solaris, and custom realms.

The default file-based security realm is suitable for developer environments, where new applications are developed and tested. At deployment time, the server administrator can choose between the Lightweight Directory Access Protocol (LDAP) and Solaris security realms. Many large enterprises use LDAP-based directory servers to maintain employee and customer profiles. Small to medium enterprises that do not already use a directory server may find it advantageous to leverage their investment in Solaris security infrastructure.

For more information on security realms, see "Administering Authentication Realms" in GlassFish Server Open Source Edition Security Guide.

The type of authentication mechanism chosen may require additional hardware for the deployment. Typically a directory server executes on a separate server, and may also require a backup for replication and high availability. Refer to the Oracle Java System Directory Server (http://www.oracle.com/us/products/middleware/identity-management/oracle-directory-services/index.html) documentation for more information on deployment, sizing, and availability guidelines.

An authenticated user’s access to application functions may also need authorization checks. If the application uses the role-based Java EE authorization checks, the application server performs additional checking on each protected access, which incurs additional overhead. When you perform capacity planning, you must take this overhead into account.

Encryption

For security reasons, sensitive user inputs and application output must be encrypted. Most business-oriented web applications encrypt all or some of the communication flow between the browser and GlassFish Server. Online shopping applications encrypt traffic when the user is completing a purchase or supplying private data. Portal applications such as news and media typically do not employ encryption. Secure Sockets Layer (SSL) is the most common security framework, and is supported by many browsers and application servers.

The GlassFish Server supports SSL 2.0 and 3.0 and contains software support for various cipher suites. It also supports integration of hardware encryption cards for even higher performance. Security considerations, particularly when using the integrated software encryption, will impact hardware sizing and capacity planning.

Consider the following when assessing the encryption needs for a deployment:

- What is the nature of the applications with respect to security? Do they encrypt all or only a part of the application inputs and output? What percentage of the information needs to be securely transmitted?

- Are the applications going to be deployed on an application server that is directly connected to the Internet? Will a web server exist in a demilitarized zone (DMZ) separate from the application server tier and backend enterprise systems?

  A DMZ-style deployment is recommended for high security. It is also useful when the application has a significant amount of static text and image content and some business logic that executes on the GlassFish Server, behind the most secure firewall. GlassFish Server provides secure reverse proxy plugins to enable integration with popular web servers. The GlassFish Server can also be deployed and used as a web server in a DMZ.

- Is encryption required between the web servers in the DMZ and application servers in the next tier? The reverse proxy plugins supplied with GlassFish Server support SSL encryption between the web server and application server tier. If SSL is enabled, hardware capacity planning must take into account the encryption policy and mechanisms.

- If software encryption is to be employed:

  - What is the expected performance overhead for every tier in the system, given the security requirements?

  - What are the performance and throughput characteristics of various choices?

For information on how to encrypt the communication between web servers and GlassFish Server, see "Administering Message Security" in GlassFish Server Open Source Edition Security Guide.

High Availability Clustering, Load Balancing, and Failover

GlassFish Server 4.0 enables multiple GlassFish Server instances to be clustered to provide high availability through failure protection, scalability, and load balancing.

High availability applications and services provide their functionality continuously, regardless of hardware and software failures. To make such reliability possible, GlassFish Server 4.0 provides mechanisms for maintaining application state data between clustered GlassFish Server instances. Application state data, such as HTTP session data, stateful EJB sessions, and dynamic cache information, is replicated in real time across server instances. If any one server instance goes down, the session state is available to the next failover server, resulting in minimum application downtime and enhanced transactional security.

GlassFish Server provides the following high availability features:

- High Availability Session Persistence
- High Availability Java Message Service
- RMI-IIOP Load Balancing and Failover

See Tuning High Availability Persistence for high availability persistence tuning recommendations.

See the GlassFish Server Open Source Edition High Availability Administration Guide for complete information about configuring high availability clustering, load balancing, and failover features in GlassFish Server 4.0.

Hardware Resources

The type and quantity of hardware resources available greatly influence performance tuning and site planning.

GlassFish Server provides excellent vertical scalability. It can scale to efficiently utilize multiple high-performance CPUs, using just one application server process. A smaller number of application server instances makes maintenance easier and administration less expensive. Also, deploying several related applications on fewer application servers can improve performance, due to better data locality, and reuse of cached data between co-located applications. Such servers must also contain large amounts of memory, disk space, and network capacity to cope with increased load.

GlassFish Server can also be deployed on large "farms" of relatively modest hardware units. Business applications can be partitioned across various server instances. One or more external load balancers can efficiently spread user access across all the application server instances. A horizontal scaling approach may improve availability and lower hardware costs, and is suitable for some types of applications. However, this approach requires administration of more application server instances and hardware nodes.

Administration

A single GlassFish Server installation on a server can encompass multiple instances. A group of one or more instances that are administered by a single Administration Server is called a domain. Grouping server instances into domains permits different people to independently administer the groups.

You can use a single-instance domain to create a "sandbox" for a particular developer and environment. In this scenario, each developer administers his or her own application server, without interfering with other application server domains. A small development group may choose to create multiple instances in a shared administrative domain for collaborative development.

In a deployment environment, an administrator can create domains based on application and business function. For example, internal Human Resources applications may be hosted on one or more servers in one administrative domain, while external customer applications are hosted on several administrative domains in a server farm.

GlassFish Server supports virtual server capability for web applications. For example, a web application hosting service provider can host different URL domains on a single GlassFish Server process for efficient administration.

For detailed information on administration, see the GlassFish Server Open Source Edition Administration Guide.

General Tuning Concepts

Some key concepts that affect performance tuning are:

- User load
- Application scalability
- Margins of safety

The following table describes these concepts and how they are measured in practice. The leftmost column names the general concept, the second column gives the practical ramifications of the concept, the third column describes the measurements, and the rightmost column describes the value sources.

Table 1-2 Factors That Affect Performance

| Concept | In practice | Measurement | Value sources |
|---|---|---|---|
| User Load | Concurrent sessions at peak load | Transactions Per Minute (TPM), Web Interactions Per Second (WIPS) | (Max. number of concurrent users) * (expected response time) / (time between clicks). Example: (100 users * 2 sec) / 10 sec = 20 |
| Application Scalability | Transaction rate measured on one CPU | TPM or WIPS | Measured from workload benchmark. Perform at each tier. |
| Vertical scalability | Increase in performance from additional CPUs | Percentage gain per additional CPU | Based on curve fitting from benchmark. Perform tests while gradually increasing the number of CPUs. Identify the "knee" of the curve, where additional CPUs are providing uneconomical gains in performance. Requires tuning as described in this guide. Perform at each tier and iterate if necessary. Stop here if this meets performance requirements. |
| Horizontal scalability | Increase in performance from additional servers | Percentage gain per additional server process and/or hardware node. | Use a well-tuned single application server instance, as in previous step. Measure how much each additional server instance and hardware node improves performance. |
| Safety Margins | High availability requirements | If the system must cope with failures, size the system to meet performance requirements assuming that one or more application server instances are non functional | Different equations used if high availability is required. |
| | Excess capacity for unexpected peaks | It is desirable to operate a server at less than its benchmarked peak, for some safety margin | 80% system capacity utilization at peak loads may work for most installations. Measure your deployment under real and simulated peak loads. |
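
The user-load formula in Table 1-2 is simple arithmetic and can be checked with a few lines of code. The following is a minimal sketch in Python. The function name `peak_user_load` and its parameter names are illustrative only and are not part of GlassFish Server.

```python
def peak_user_load(max_users, response_time_s, time_between_clicks_s):
    """Apply the user-load formula from Table 1-2.

    (max concurrent users) * (expected response time) / (time between clicks)
    """
    if time_between_clicks_s <= 0:
        raise ValueError("time between clicks must be positive")
    return max_users * response_time_s / time_between_clicks_s


# The example from Table 1-2: 100 users, 2 sec response time, 10 sec between clicks.
print(peak_user_load(100, 2, 10))  # 20.0
```

Keeping both times in the same unit (seconds here) is the only requirement; the two time values cancel, so the result scales with the number of users.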

Capacity Planning

The previous discussion guides you towards defining a deployment architecture. However, you determine the actual size of the deployment by a process called capacity planning. Capacity planning enables you to predict:

- The performance capacity of a particular hardware configuration.
- The hardware resources required to sustain specified application load and performance.

You can estimate these values through careful performance benchmarking, using an application with realistic data sets and workloads.
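
As a rough illustration of how benchmark results feed a sizing estimate, the sketch below combines the 80% utilization guideline from Table 1-2 with a simple allowance for failed instances. It is written in Python under stated assumptions: the function `instances_needed`, its parameters, and the "add one spare instance per tolerated failure" rule are hypothetical simplifications, not equations taken from this guide, and the input figures are placeholders rather than measurements.

```python
import math


def instances_needed(peak_load, per_instance_capacity,
                     target_utilization=0.80, tolerated_failures=0):
    """Estimate how many server instances a peak load requires.

    peak_load and per_instance_capacity must use the same unit (for example TPM).
    target_utilization caps how much of the benchmarked peak each instance uses.
    tolerated_failures adds spare instances (an assumed, simplified HA rule).
    """
    if per_instance_capacity <= 0:
        raise ValueError("per-instance capacity must be positive")
    if not 0 < target_utilization <= 1:
        raise ValueError("target utilization must be in (0, 1]")
    usable_capacity = per_instance_capacity * target_utilization
    return math.ceil(peak_load / usable_capacity) + tolerated_failures


# Placeholder figures: 5000 TPM at peak, 1500 TPM benchmarked per instance.
print(instances_needed(5000, 1500))                        # 5
print(instances_needed(5000, 1500, tolerated_failures=1))  # 6
```

An estimate like this is only a starting point; the result should be confirmed by measuring the deployment under real and simulated peak loads.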

To Determine Capacity

1. Determine performance on a single CPU.

   First determine the largest load that a single processor can sustain. You can obtain this figure by measuring the performance of the application on a single-processor machine. Either leverage the performance numbers of an existing application with similar processing characteristics or, ideally, use the actual application and workload in a testing environment. Make sure that the application and data resources are tiered exactly as they would be in the final deployment.

2. Determine vertical scalability.

   Determine how much additional performance you gain when you add processors. That is, you are indirectly measuring the amount of shared resource contention that occurs on the server for a specific workload. Either obtain this information based on additional load testing of the application on a multiprocessor system, or leverage existing information from a similar application that has already been load tested.

   Running a series of performance tests on one to eight CPUs, in incremental steps, generally provides a sense of the vertical scalability characteristics of the system. Be sure to properly tune the application, GlassFish Server, backend database resources, and operating system so that they do not skew the results.

3. Determine horizontal scalability.

   If sufficiently powerful hardware resources are available, a single hardware node may meet the performance requirements. However, for better availability, you can cluster two or more systems. Employing external load balancers and workload simulation, determine the performance benefits of replicating one well-tuned application server node, as determined in step 2.
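
The vertical scalability measurement can be summarized from a benchmark series with a short script. The sketch below, in Python, computes the percentage gain per additional CPU and picks a "knee" using a fixed threshold. It uses simple differences between benchmark steps rather than curve fitting, and the function names, the 50% threshold, and the sample numbers are all assumptions made for illustration, not measured GlassFish Server results.

```python
def gain_per_added_cpu(throughput_by_cpus):
    """Return (cpu_count, gain_pct) pairs, one per benchmark step.

    gain_pct is the throughput added by each extra CPU in that step,
    expressed as a percentage of the single-CPU throughput.
    """
    cpus = sorted(throughput_by_cpus)
    if cpus[0] != 1:
        raise ValueError("the series must start with a single-CPU measurement")
    single_cpu = throughput_by_cpus[1]
    gains = []
    for prev, curr in zip(cpus, cpus[1:]):
        added_throughput = throughput_by_cpus[curr] - throughput_by_cpus[prev]
        added_cpus = curr - prev
        gains.append((curr, 100.0 * added_throughput / added_cpus / single_cpu))
    return gains


def knee_cpu_count(throughput_by_cpus, min_gain_pct=50.0):
    """Return the largest CPU count reached before the gain per added CPU
    first drops below min_gain_pct (an assumed cutoff for 'uneconomical')."""
    knee = min(throughput_by_cpus)
    for cpu_count, gain_pct in gain_per_added_cpu(throughput_by_cpus):
        if gain_pct < min_gain_pct:
            break
        knee = cpu_count
    return knee


# Made-up sample series (CPUs -> transactions per minute), not measured data.
sample = {1: 1000, 2: 1900, 4: 3400, 8: 4600}
print(gain_per_added_cpu(sample))  # [(2, 90.0), (4, 75.0), (8, 30.0)]
print(knee_cpu_count(sample))      # 4
```

What counts as an uneconomical gain depends on hardware cost and performance goals, so the threshold should be chosen per deployment. The same calculation applies to horizontal scalability if the keys are server instances instead of CPUs.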

User Expectations

Application end-users generally have some performance expectations. Often you can numerically quantify them. To ensure that customer needs are met, you must understand these expectations clearly, and use them in capacity planning.

Consider the following questions regarding performance expectations:

- What do users expect the average response times to be for various interactions with the application? What are the most frequent interactions? Are there any extremely time-critical interactions? What is the length of each transaction, including think time? In many cases, you may need to perform empirical user studies to get good estimates.

- What are the anticipated steady-state and peak user loads? Are there any particular times of the day, week, or year when you observe or expect to observe load peaks? While there may be several million registered customers for an online business, at any one time only a fraction of them are logged in and performing business transactions. A common mistake during capacity planning is to use the total size of the customer population as the basis, rather than the average and peak numbers of concurrent users. The number of concurrent users also may exhibit patterns over time.

- What is the average and peak amount of data transferred per request? This value is also application-specific. Good estimates for content size, combined with other usage patterns, will help you anticipate network capacity needs.

- What is the expected growth in user load over the next year? Planning ahead for the future will help avoid crisis situations and system downtimes for upgrades.
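
The answers to the last two questions translate directly into numbers for capacity planning. The following Python sketch shows one way to do so, assuming a steady request rate and compound annual growth. The function names and sample figures are hypothetical, and the bandwidth estimate counts payload bytes only, ignoring protocol overhead.

```python
def required_bandwidth_mbps(requests_per_second, avg_bytes_per_request):
    """Estimate payload bandwidth in megabits per second (1 Mbit = 1,000,000 bits)."""
    return requests_per_second * avg_bytes_per_request * 8 / 1_000_000


def projected_peak_users(current_peak_users, annual_growth_rate, years=1):
    """Project peak concurrent users, assuming compound annual growth."""
    return current_peak_users * (1 + annual_growth_rate) ** years


# Placeholder figures: 200 requests/sec at 50,000 bytes each; 50% yearly growth.
print(required_bandwidth_mbps(200, 50_000))  # 80.0
print(projected_peak_users(1000, 0.5))       # 1500.0
```

Run the same estimate with both average and peak values, since peak content size and peak request rate together determine the network capacity the deployment must provide.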

Further Information

- For more information on Java performance, see Java Performance Documentation (http://java.sun.com/docs/performance) and Java Performance BluePrints (http://java.sun.com/blueprints/performance/index.html).

- For more information about performance tuning for high availability configurations, see the GlassFish Server Open Source Edition High Availability Administration Guide.

- For complete information about using the Performance Tuning features available through the GlassFish Server Administration Console, refer to the Administration Console online help.

- For details on optimizing EJB components, see Seven Rules for Optimizing Entity Beans (http://java.sun.com/developer/technicalArticles/ebeans/sevenrules/).

- For details on profiling, see "Profiling Tools" in GlassFish Server Open Source Edition Application Development Guide.

- To view a demonstration video showing how to use the GlassFish Server Performance Tuner, see the Oracle GlassFish Server 3.1 - Performance Tuner demo (http://www.youtube.com/watch?v=FavsE2pzAjc).

- To find additional Performance Tuning development information, see the Performance Tuner in Oracle GlassFish Server 3.1 (http://blogs.oracle.com/jenblog/entry/performance_tuner_in_oracle_glassfish) blog.